#5521 closed defect (fixed)

Some ComputeShortPath 700ms simulation lagspike over 40 seconds

| Reported by: | elexis | Owned by: | wraitii |

|---|---|---|---|

| Priority: | Release Blocker | Milestone: | Alpha 24 |

| Component: | Simulation | Keywords: | regression |

| Cc: | Patch: |

Description

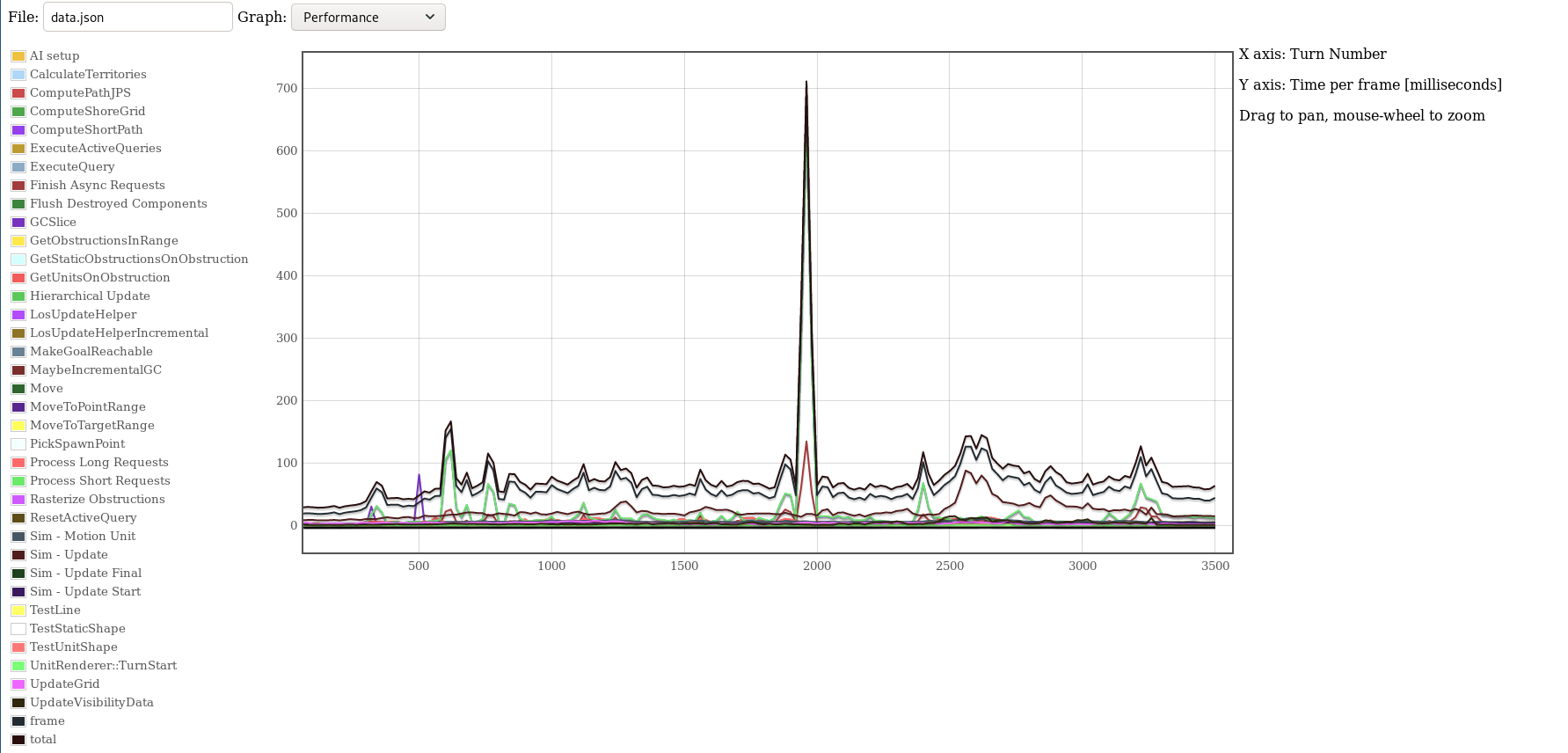

Between turn 1920 and turn 2000 in this r22504 replay, computing a turn took far longer than the 80ms average: up to 700ms, which is 5 times the otherwise maximum of 140ms.

Note that the spike lasts quite long, from 16:00 to 16:40.

The slow parts are ComputeShortPath and Process Short Requests.

replay at https://code.wildfiregames.com/F1008256

profile1 json at https://code.wildfiregames.com/F1008316

What is particularly surprising is that there are not many units walking around, maybe < 200 that weren't gathering?

Perhaps this isn't a regression, and perhaps it is a duplicate ticket.

In early 2018 temple looked a bit at lagspikes and mentioned that one type of lagspike occurs when every path is obstructed (all gates closed), and there was a gate-lock command in this replay. (But does it have to be that bad for performance?)

Attachments (4)

Change History (10)

by , 5 years ago

| Attachment: | Screenshot from 2019-07-20 02-39-22.png added |

|---|

comment:1 by , 5 years ago

| Description: | modified (diff) |

|---|

comment:2 by , 5 years ago

by , 5 years ago

| Attachment: | Capture d’écran 2019-07-20 à 09.59.17.png added |

|---|

Non-visual replay - Profiler2 of the same turns

comment:3 by , 5 years ago

| Milestone: | Backlog → Alpha 24 |

|---|---|

| Priority: | Release Blocker → Must Have |

See attached screenshot for a profiler2 non-visual replay of the same.

As can be seen, several of these turns take over 800ms to compute. The cause is the few (~10) ComputeShortPath calls which are each taking 80ms.

Attached is a minimal replay of the same lag, and a screenshot of how the short pathfinder must take many, many nodes into account.

This is a worst-case for the short pathfinder: none of the trees are axis-aligned (AA) squares, and the path is sometimes unreachable because of surrounding units. One fix in UM (UnitMotion) would be to fail faster when walking (i.e. having a higher max-range). But the fundamental issue is that this is a worst-case for the short pathfinder and we can't do much against it here. Unit-pushing would probably help in this case, as we would not be computing a short path.

I'm downgrading this to "Must Have" - It might happen, but it's fairly rare that maps generate such big forests that aren't completely impassable. The UM issue of trying to go somewhere unreachable I plan to alleviate somewhat downstream by failing earlier when the order is walking or such.

--

A 'map' fix would be to clump such trees close so that units can no longer enter them, which would probably help.

comment:4 by , 5 years ago

| Owner: | set to |

|---|---|

| Priority: | Must Have → Release Blocker |

Re-upgrading to Release Blocker, as this actually doesn't seem to happen on A23 (or at least much more rarely, from my testing).

The 'good' news is I tried reverting r22473 and it didn't fix the lag, so that bug fix commit can stay. It appears in general to be more tied to how UM no longer fails so early, thus repeatedly computing short paths when the goal is unreachable, whereas before with "ShouldConsiderOurselvesAtDestination" it didn't.

Gonna try a few things and see how I can mitigate this; possibly I'll just have to do the 'more messages' thing I had in mind.
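To illustrate the idea of failing earlier instead of repeatedly recomputing short paths towards an unreachable goal, here is a minimal sketch of a per-unit retry budget. It is not taken from the 0 A.D. codebase: the class `ShortPathRetryBudget`, its methods, and the limit of 3 used in the usage note are hypothetical names and values chosen purely for illustration, and the actual mitigation in UnitMotion may look quite different.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper (not part of 0 A.D.): counts consecutive failed
// short-path requests for one unit and reports when to give up.
class ShortPathRetryBudget
{
public:
    explicit ShortPathRetryBudget(uint8_t maxFailures) : m_MaxFailures(maxFailures)
    {
        // A budget of zero would never allow a single attempt.
        assert(maxFailures > 0);
    }

    // Call when a short-path request returned no usable path.
    // Returns true if the unit may retry, false if it should fail the order.
    bool OnPathFailed()
    {
        if (m_Failures < m_MaxFailures)
            ++m_Failures;
        return m_Failures < m_MaxFailures;
    }

    // Call when the unit actually advanced along a path: progress resets the count.
    void OnProgress() { m_Failures = 0; }

    uint8_t Failures() const { return m_Failures; }

private:
    uint8_t m_MaxFailures;
    uint8_t m_Failures = 0;
};
```

With a budget of 3, a unit stuck behind an obstruction would request at most three short paths in a row before reporting failure, rather than paying for an expensive ComputeShortPath call every turn. The right limit, and whether it should depend on the order type (e.g. plain walking), is a tuning question this sketch does not answer.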

comment:6 by , 5 years ago

| Keywords: | regression added |

|---|

And without the patch in Phab:D2098 I wouldn't have found the part of the code that is triggering the lagspike (as either the Profiler2 UI capped it or I used it incorrectly).